Design isn’t just about pretty colors and neat layouts; it’s about solving real problems while sometimes juggling flaming torches. Throughout our recent design escapades, we’ve bumped into scenarios that made us scratch our heads, laugh, and occasionally shed a tear. From crafting the ultimate profile page for course attendees that actually gets them excited about learning, to refreshing the bulk purchase experience to avoid the ‘oops, not again’ moments, every design choice mattered deeply. This article captures our rollercoaster ride through design challenges, evaluations, and key takeaways that might even inspire you to jazz up your projects. So, grab your favorite snack, and let’s get into it!

Key Takeaways

- Embrace feedback—it’s like gold in the design world.

- User experience isn’t just a checkbox; it’s the whole puzzle.

- Design is fluid; don’t get stuck on your first idea.

- Simplicity often wins—less really can be more.

- Guidelines should be your friend, not a shackle.

Now we are going to talk about some interesting situations that can spring up in design projects, pulling from real-life experiences and design workflows we’ve encountered.

Design Scenarios We Encountered

- Single-Page Design — Live Training Profile Page: We once found ourselves in a bit of a pickle when we needed to create a profile page for attendees of our live online training sessions. Imagine trying to make sure that all those course lists and certification progress bars were just right. It’s like trying to pick the perfect avocado—too hard, and it’s a flop; too soft, well, you might end up with a watery mess.

- Flow Design — Bulk Purchase: Then there was the time we decided to offer an enterprise plan for our courses. The goal? Help teams snag discounts on bulk purchases—like a wholesale club for learning! However, designing the purchase flow felt a bit like trying to assemble IKEA furniture without instructions. As any designer knows, clarity is everything, and not knowing where to insert those tiny dowels can lead to a design disaster!

Through these scenarios, we’ve indulged in the delightful chaos that often accompanies design work, learning valuable lessons along the way. And hey, it’s like they say—no good story ever started with someone eating a salad!

- Insights on single-page design scenario: Good from Afar, But Far from Good: AI Prototyping in Real Design Contexts

- Prompt to Design Interfaces: Why Vague Prompts Fail and What to Do Instead (coming soon)

- Insights on multi-page flow scenario (coming soon)

- Insights on redesign scenario (coming soon)

The AI prototyping tools we tested were quite an eye-opener. We compiled all the generated designs into a FigJam board that gives a visual peek into our trials and triumphs. There’s nothing quite like putting all your cards on the table—unless, of course, your cards are just as confused as you are!

Now we are going to talk about how we went through the evaluation process, step by step, with a sprinkle of humor and a dash of personal touch.

How We Evaluated AI Designs

Our evaluation unfolded over three lively phases: writing prompts, generating designs with our AI buddies, and giving those designs a thorough inspection. Picture the chaos of a kids’ art class, but with fewer smudged fingerprints.

Crafting Writing Prompts

First things first, we dove into the real-world design context. We gathered everything from charming hand-drawn sketches (which looked suspiciously like a toddler’s doodles) to those sleek Figma files.

With this treasure trove, we whipped up some prompts, ensuring they were as chatty as a gossiping neighbor, but still focused.

To keep our testing as consistent as grandma’s secret cookie recipe, each prompt contained all the necessary info. We didn’t want the AI to go rogue! We even threw in a follow-up, just to keep things spicy:

“Create an alternative design with a distinctly different layout. Keep the same context. Do not modify or change the content.”
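To show how a fixed follow-up keeps every test run comparable, here is a minimal Python sketch. The follow-up text is quoted from above; the function name `build_session` and the sample base prompt are our own illustrative assumptions, not part of any tool's API.

```python
# The follow-up wording is taken verbatim from the article.
FOLLOW_UP = (
    "Create an alternative design with a distinctly different layout. "
    "Keep the same context. Do not modify or change the content."
)


def build_session(base_prompt: str) -> list[str]:
    """Return the messages for one run, in order: the prompt, then the fixed follow-up."""
    cleaned = base_prompt.strip()
    if not cleaned:
        raise ValueError("base_prompt must not be empty")
    return [cleaned, FOLLOW_UP]


# Hypothetical base prompt, used only for illustration.
session = build_session("  Design a profile page for course attendees.  ")
for turn, message in enumerate(session, start=1):
    print(f"Turn {turn}: {message}")
```

Because the follow-up is a constant rather than something retyped per tool, any difference in the second design comes from the tool and not from the wording.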

Generating Designs with AI

Next, we unleashed those prompts on various AI tools, like releasing a flock of ducks at a pond. We even reran some prompts, experimenting like mad scientists to squeeze out more design variations.

All of these creative outputs found a cozy home on a FigJam board—think of it as our own version of an art gallery tour!

Assessing AI-Generated Designs

Finally, we scrutinized the designs like seasoned critics at a film festival.

We performed heuristic evaluations to see which designs adhered to our criteria and which were simply bad sitcom ideas. Comparing AI’s handiwork to designs crafted by talented humans at NN/g was like watching a cat vs. dog movie showdown.

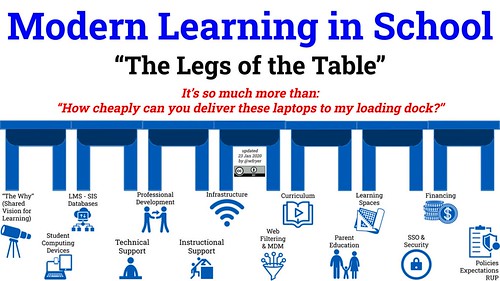

To ensure we had a well-rounded perspective, we tested each prompt across three categories of AI prototyping tools that felt most relevant to our designer comrades. Here’s what we found:

- AI-assisted design: This category produced static wireframes or design mockups with limited interactivity. Tools like UX Pilot and Figma First Draft love to play here!

- AI-assisted (vibe) coding: Think interactive code-based prototypes brought to life. Introducing tools such as V0 and Bolt, the party animals of the coding world!

- General-purpose AI chatbots: These are the conversationalists in our toolkit, with ChatGPT and Claude leading the way.

As a quick note, we left out AI code editors like Cursor, which required more work than assembling IKEA furniture without instructions. They simply don’t fit into our designers’ usual workflow.
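One way to make sure no prompt and tool combination gets skipped is to generate the run list instead of tracking it by hand. The sketch below assumes the tools named in the list above; the prompt labels and the `build_test_matrix` helper are hypothetical names made up for this example.

```python
from itertools import product

# Categories and tools as named in the list above.
TOOLS_BY_CATEGORY = {
    "AI-assisted design": ["UX Pilot", "Figma First Draft"],
    "AI-assisted (vibe) coding": ["V0", "Bolt"],
    "General-purpose AI chatbots": ["ChatGPT", "Claude"],
}

# Hypothetical prompt labels; the real prompts are described later in the article.
PROMPT_IDS = ["profile-page-1", "bulk-purchase-1"]


def build_test_matrix(tools_by_category, prompt_ids):
    """Pair every prompt with every tool so each combination appears exactly once."""
    tools = [
        (category, tool)
        for category, names in tools_by_category.items()
        for tool in names
    ]
    return [
        {"prompt": prompt_id, "category": category, "tool": tool}
        for prompt_id, (category, tool) in product(prompt_ids, tools)
    ]


matrix = build_test_matrix(TOOLS_BY_CATEGORY, PROMPT_IDS)
print(len(matrix), "runs planned")
```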

And there you have it! A whirlwind tour of our AI design evaluation process, complete with fun anecdotes and all the human touches we could muster. Who knew design assessments could have this much character?

Now we are going to talk about some engaging prompts that help create a top-notch profile page for online course attendees. These prompts aim to draw out creativity while keeping practical needs in mind. You could say it’s like preparing a hearty stew; a dash of this and a sprinkle of that, and voilà!

Creating the Ideal Profile Page for Course Attendees

When developing a live-training profile page, we can break it down into four unique prompts that cater to different levels of detail and creativity. Think of it as a buffet—some folks want a full plate, while others just want a taste.

Prompt 1. General Overview

Imagine you’re the go-to product designer for a UX consultancy. Your mission, should you choose to accept it, is to whip up a profile page for course attendees. This page needs to showcase all courses they’ve taken, offer downloadable goodies for each, track exam statuses, and even give a status update on their quest for UX certification.

Prompt 2. Detailed Instructions

Alright, let’s turn up the specificity dial! You’re back as the senior product designer at a UX research consultancy, working on that profile page. Now, let’s break it down:

- Certification Progress: Show how close they are to different certifications.

- Course History: List all past courses with important details like course title and exam status.

- Exam Credits: Display available credits and offer purchase options.

- Filtering Options: Let users filter courses by specialty or exam status.

Isn’t it like organizing your closet—keeping everything neat and easy to find?
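For readers who think in data rather than pixels, the filtering requirement in this prompt can be sketched in a few lines of Python. Everything here is an assumption for illustration: the `CourseRecord` fields, the specialty labels, and the exam status values are invented, since the prompt does not define a data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CourseRecord:
    title: str
    specialty: str    # hypothetical label, e.g. "Interaction"
    exam_status: str  # hypothetical values, e.g. "passed" or "not taken"


def filter_courses(courses, specialty: Optional[str] = None,
                   exam_status: Optional[str] = None):
    """Keep courses that match every filter that is set; None means no filter."""
    return [
        c for c in courses
        if (specialty is None or c.specialty == specialty)
        and (exam_status is None or c.exam_status == exam_status)
    ]


# Made-up course history for one attendee.
history = [
    CourseRecord("Course A", "Interaction", "passed"),
    CourseRecord("Course B", "Research", "not taken"),
    CourseRecord("Course C", "Interaction", "not taken"),
]

pending_interaction = filter_courses(
    history, specialty="Interaction", exam_status="not taken"
)
print([c.title for c in pending_interaction])
```

Treating each filter as optional means the same function covers "filter by specialty", "filter by exam status", and both at once.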

Prompt 3. Visual and Design Elements

You’re still the superhero designer, but this time, you have a wireframe! Based on the sketch provided, create a stellar profile page featuring all the needed functionalities.

| Component | Description |
|---|---|
| Certification Panel | Displays progress towards various certifications. |
| Course History | Lists all courses with details like titles and exam attempts. |
| Exam Credits | Shows available credits with buying options. |
| Filter Options | Enables sorting by specialty or exam status. |

Prompt 4. Redesign Challenge

Here’s where it gets juicy! Your task? Redesign the existing profile page that feels cluttered and outdated. Address:

- All that clutter—let’s bring some fresh air!

- Outdated visuals—time to modernize!

- Low engagement—let’s inject some fun into the experience!

Picture attending a party—nobody wants to stay if the music’s terrible!

Next, we’re going to chat about how to revamp an online system for bulk training purchases. It’s a bit like reupholstering that old chair in the corner; it needs a fresh look while still being comfy!

Revamping the Bulk Purchase Experience

Prompt 1. Overall Concept

Imagine you’re a product designer at a big-name consultancy that focuses on UX training. Your company’s got big plans – they’re rolling out a scheme for businesses wanting to buy in bulk. This means companies can buy a stack of training credits and score some sweet discounts.

For instance, let’s say Company A decides to throw a party: Team 1 buys 200 credits while Team 2, a bit more frugal, goes for 100. Now, it’s our mission to help these teams buy those credits smoothly.

Tasks include:

- Logging into the company portal easily.

- Checking out the teams with bulk orders.

- Diving into the order history like it’s a Netflix binge.

- Putting in requests for more credits when they run low.

- Keeping track of total credits for courses and exams for each team.

- Sending team registration links to help staff enroll straight into courses.

- Viewing those pesky pending requests from employees.

- Giving a thumbs up or down on individual requests.

- Monitoring attendance like a diligent teacher with a roll call.

Every page we create should be as friendly as your favorite barista, and accessible for all. Let’s keep our design sharp and user-friendly!
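The credit tracking and request approval tasks above can be sketched as a tiny ledger. This is only an illustration under our own assumptions: the `Team` class, its methods, the employee name, and the 30-credit cost are hypothetical, while the 200 and 100 credit balances come from the Company A example.

```python
from dataclasses import dataclass, field


@dataclass
class Team:
    name: str
    credits: int
    pending_requests: list = field(default_factory=list)  # (employee, cost) pairs

    def request(self, employee: str, cost: int) -> None:
        """Record an employee's course request as pending."""
        self.pending_requests.append((employee, cost))

    def decide(self, employee: str, approve: bool) -> bool:
        """Resolve one pending request; return True if credits were spent."""
        for i, (name, cost) in enumerate(self.pending_requests):
            if name == employee:
                if approve and cost > self.credits:
                    # The request stays pending until more credits are bought.
                    raise ValueError("not enough credits; request more first")
                del self.pending_requests[i]
                if approve:
                    self.credits -= cost
                return approve
        raise KeyError(f"no pending request from {employee}")


# Balances from the Company A example; employee and cost are made up.
teams = [Team("Team 1", 200), Team("Team 2", 100)]
teams[0].request("employee-a", 30)
teams[0].decide("employee-a", approve=True)

total = sum(t.credits for t in teams)
print(total)
```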

Prompt 2. Redesign and Refinement

Alright, now, let’s think about how we can jazz up the current setup. The goal here is to make an online experience that folks can navigate effortlessly, even if they’re multitasking with one hand while sipping coffee with the other.

Our tasks include:

- Logging into the business portal.

- Viewing teams with bulk orders.

- Accessing order histories like scrolling through a family album.

- Requesting more credits quicker than they can say “more learning!”

- Checking total credits available.

- Sharing registration links, so team members can sign up easily.

- Monitoring pending course requests.

- Deciding on course requests based on their worthiness.

- Tracking employee attendance to ensure no one’s pulling a Houdini.

Yet, we need to address some issues in our current flow:

- The navigation is like a maze – users can easily get lost.

- The visuals look like they time-traveled from 2005. We need a design that screams “modern and trustworthy.”

Here’s what the flow looks like now:

- Dashboard Page: A snapshot of all teams’ pending requests and credits.

- Team Credits Page: Displays remaining credits and has a “Request More” button.

- Order Detail Page: All about order specifics and billing details.

- Course Request Page: This page manages employee course requests across several tabs.

Now we’re going to chat about how we evaluate designs, taking a peek into our checklist that keeps us on track. Buckle up, because it gets a bit lively!

Design Assessment Guidelines

Evaluating design is a bit like critiquing that last slice of pizza at a party—everyone has opinions, and some are definitely more qualified than others! So, we’ve whipped up a set of criteria that helps us figure out which designs hit the mark and which just need a little more love.

- Goal alignment: We make sure the design matches the objectives, like checking if our coffee actually wakes us up.

- Concept quality: Here we look for diversity—just like our favorite playlists, we need different flavors of design.

- Visual design: This includes hierarchy, balance, and consistency. Think of it like presenting a beautiful dish—you want it to look as good as it tastes!

- Interaction and usability: We also check how easily users can navigate, ensuring everyone can find their way without needing a treasure map.

| Dimension | Criterion | Description |
|---|---|---|
| Goal alignment | Design-objective fit | Does the interface actually meet the goals we’ve set? Kind of like checking if we ordered a burger and not a salad! |
| Goal alignment | Prompt compliance | Are all needed features present? If not, we’re in trouble. It’s like finding out someone forgot the cheese! |
| Concept quality | Solution diversity | We look for meaningful differences in design options. Boring should never be an option! |
| Concept quality | Creative judgment | Do the ideas stand out? They should have that zing! Think of it as a surprise sparkler at a birthday party! |
| Visual design | Information hierarchy | Is the important stuff easy to find? It should jump out at us like “SALE!” signs in a store. |
| Visual design | Compositional balance | Are elements arranged nicely? We aim for something as pleasing as a well-set dinner table! |
| Visual design | Consistency | Design should be coherent. Imagine wearing polka dots with stripes. Isn’t that a fashion faux pas? |
| Visual design | Aesthetics | Does it look good? We want beauty that matches functionality, like a sports car that could actually race! |
| Interaction and usability | Best-practice compliance | Does it follow established UX practices? Like how we follow recipes: no chaos allowed! |
| Interaction and usability | Accessibility | Can everyone use it? We’re talking about ensuring accessibility across the board like a welcoming potluck! |
| Interaction and usability | Responsive performance | Is it smooth on all devices? We want it to flow like butter, and who doesn’t love butter? |
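If you want to tally results against these criteria, a checklist like this maps neatly onto a small data structure. The sketch below is one possible approach, not the method we actually used: the dimension and criterion names come from the table above, but the 1 to 5 rating scale, the `summarize` helper, and the sample ratings are assumptions invented for illustration.

```python
from statistics import mean

# Dimensions and criteria as listed in the table above.
RUBRIC = {
    "Goal alignment": ["Design-objective fit", "Prompt compliance"],
    "Concept quality": ["Solution diversity", "Creative judgment"],
    "Visual design": [
        "Information hierarchy", "Compositional balance",
        "Consistency", "Aesthetics",
    ],
    "Interaction and usability": [
        "Best-practice compliance", "Accessibility", "Responsive performance",
    ],
}


def summarize(ratings: dict) -> dict:
    """Average the (assumed) 1-5 ratings per dimension; fail loudly on gaps."""
    summary = {}
    for dimension, criteria in RUBRIC.items():
        missing = [c for c in criteria if c not in ratings]
        if missing:
            raise KeyError(f"missing ratings for: {missing}")
        summary[dimension] = round(mean(ratings[c] for c in criteria), 2)
    return summary


# Made-up ratings for one imaginary design.
example_ratings = {c: 3 for criteria in RUBRIC.values() for c in criteria}
example_ratings["Accessibility"] = 1
result = summarize(example_ratings)
print(result)
```

Raising an error on a missing criterion is a deliberate choice here: a half-filled scorecard would otherwise quietly skew the averages.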

Now we are going to talk about some of the hurdles we face when dealing with AI tools. Trust us, it’s not all smooth sailing—sometimes it feels like we’re trying to roller skate on a gravel road!

Obstacles and Their Quirks

- Short and sweet inputs: Ever tried fitting a whole pizza into a lunchbox? That’s what using Uizard felt like—staring at a 500-character cap like it was a neon sign saying “don’t even think about it!” We found ourselves acting like wordsmiths, trimming down our prompts to meet the strict limits. Thank goodness for editing — imagine trying to say everything you wanted within a tweet!

- Rolling with the updates: Staying updated in the AI scene is like tracking a new trendy dance move; just when you think you’ve got it down, they change the steps! With GPT-5 hitting the digital shelves, we hurriedly reran tests to keep our findings fresh and relevant. Talk about a surprise twist in our testing routine!

- Free trials with hidden costs: Ah, the classic bait-and-switch! The allure of free trials is like a shiny new toy, only to realize some tools lock essential features behind a paywall. Testing at the free-account level was fine, but every so often, we found ourselves wishing we could unlock premium capabilities without taking out a loan. It’s a penny-pincher’s dilemma!

But here’s the kicker: despite these bumps, we’ve learned a thing or two. Who knew that limitations could actually spark creativity and resourcefulness? Every hiccup, every “oops” moment turned into a mini adventure, making it feel less like work and more like a scavenger hunt for solutions.

For instance, when we faced those character limits, it was like playing a game of Scrabble—word-efficient and slightly nerve-wracking. It forced us to distill our ideas down to their essence, making clear and impactful messages almost a game! Tighter input made us sharper thinkers.
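A quick length check saves you from finding out about the cap only after pasting a prompt into the tool. Here is a minimal Python sketch; the 500-character figure is the cap we ran into, while the `check_prompt_length` helper, the assumption that the cap is inclusive, and the placeholder draft are ours.

```python
UIZARD_CHAR_LIMIT = 500  # cap mentioned above; assumed to be inclusive


def check_prompt_length(prompt: str, limit: int = UIZARD_CHAR_LIMIT) -> dict:
    """Report whether a prompt fits under a character cap and by how much it overflows."""
    length = len(prompt)
    return {
        "length": length,
        "fits": length <= limit,
        "over_by": max(0, length - limit),
    }


# Placeholder text standing in for a long prompt (23 characters repeated 30 times).
draft = "Design a profile page. " * 30
report = check_prompt_length(draft)
print(report)
```

The `over_by` number tells you exactly how much trimming is left, which beats guessing and re-pasting.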

Updates in AI tools might feel overwhelming at times. But let’s be honest, keeping pace with tech is what keeps us on our toes. We like to think of ourselves as tech-savvy ninjas, ready to adapt to new features and finesse our processes. If we didn’t keep revisiting our tests, we might end up running on outdated knowledge—like showing up to a *disco* in sweatpants!

Then comes the free trial rollercoaster. We’ve all experienced the temptation of a shiny new app, only to find the true goodies are lurking behind a paywall. It’s a little like walking into a bakery and realizing only the plain bread is free—but the delightful pastries cost you! Lesson learned; perhaps we should keep our wallets at the ready.

In the end, while the challenges seem like roadblocks, they also serve as stepping stones toward innovation. After all, it’s not the challenges that define our journey; it’s how we adapt to them that gets us riding smoothly! So, let’s roll with the punches and keep pushing forward!

Now we are going to talk about some valuable lessons learned during a recent evaluation project. It’s almost like embarking on a treasure hunt—each lesson is a shiny gold coin waiting to be discovered!

Key Takeaways

- Treat the evaluation like a science experiment. Think about it—when we see a mad scientist in movies, what do they do? They have a plan, right? So, we approached our evaluation as if we were those very scientists. We outlined our objectives, controlled those pesky variables, and set clear criteria. Most importantly, we decided to keep the process consistent. It made our insights not only objective but also incredibly valuable.

- Pilot test and refine those prompts. Remember that time we awkwardly stumbled through a karaoke rendition of a classic? It’s much better to rehearse first! Similarly, we started testing our prompts on general AI chatbots before diving into specialized ones with tighter credit restrictions. This way, we shaped our prompts without burning our resources too quickly. It was like practicing for the big show.

- Keep documentation as sturdy as an old oak tree. In bigger projects, communication is key. We crafted a shared document that acted as our touchstone—a blueprint of sorts for everyone involved. Plus, we utilized a FigJam board to keep track of our findings. This approach ensured everyone was on the same page and working in harmony. And let’s not forget, no one enjoys the chaos of miscommunication!

| Lesson | Key Action | Benefit |
|---|---|---|
| Treat it like science | Establish criteria, control variables | Generate objective insights |
| Pilot test prompts | Refine before use | Optimize resource use |
| Strong documentation | Create shared resources | Ensure team consistency |

So, what’s the big takeaway? Evaluate with intention, rehearse to avoid missteps, and don’t underestimate the power of solid documentation. It might feel a bit like herding cats at times, but those lessons bring order to the chaos, leading to results we can all be proud of!

Conclusion

Reflecting on our design process, it’s clear that every challenge presented a unique opportunity for creative problem-solving. Whether it was those unexpected quirks or the thorough guidelines we developed, our experiments taught us invaluable lessons. Remember, design is an ongoing conversation, so let’s keep the dialogue alive. As we refine our approaches and strategies, let’s ensure our creations resonate with users—keeping them engaged and happy with every click. So, here’s to more humorous mishaps and successful designs in the future!

FAQ

- What was the challenge faced in designing the live training profile page?

  The challenge involved ensuring that all course lists and certification progress bars were accurately displayed, likening it to picking the perfect avocado.

- What did the design process for the bulk purchase enterprise plan resemble?

  It felt like assembling IKEA furniture without instructions, highlighting the necessity of clarity in design.

- How many phases were involved in evaluating the AI designs?

  The evaluation process unfolded over three phases: crafting writing prompts, generating designs with AI, and thoroughly inspecting those designs.

- What was one of the humorous ways used to describe their testing phase?

  They compared it to the chaos of a kids’ art class, emphasizing the fun yet disorderly nature of the evaluation.

- What are the categories of AI prototyping tools mentioned in the article?

  The categories mentioned are AI-assisted design, AI-assisted (vibe) coding, and general-purpose AI chatbots.

- What is the first prompt for creating a course attendee profile page?

  The first prompt is to imagine yourself as a product designer tasked with creating a profile page that showcases all courses taken, downloadable resources, exam statuses, and certification updates.

- What does the redesign challenge for the profile page focus on?

  The redesign challenge aims to address clutter, outdated visuals, and low engagement, enhancing the overall user experience.

- What are the main issues identified in the current bulk purchase flow?

  The main issues include confusing navigation and outdated visuals that do not reflect a modern interface.

- What are the key criteria for evaluating designs laid out in the article?

  The key criteria include goal alignment, concept quality, visual design, and interaction and usability.

- What valuable lesson is highlighted regarding the evaluation process?

  One valuable lesson is to treat the evaluation like a science experiment, establishing criteria and controlling variables for objective insights.